Again, unless you're dealing with a couple of orders per day only, you'll most likely have your orders processed in an ERP and inventory synchronized automatically. Shipments and payments need to be processed and you might want to import you initial customer base from your previous platform or synchronize it with an external CRM system. The number of possible integrations is endless.

There are tons of solutions out there focusing on specific scenarios. The most popular scenario is probably product imports. Some of the solutions are generic, others or specific to the system they're integrating with, others again implement a very specific protocol with a third-party product.

In any cases there are many things to be considered while planning any kind of integration, incoming or outgoing.

While this post will not focus on implementation details of a specific solution I rather want to outline important things to consider while planning any kind of integrations and implementing them.

I find myself discussing these things in almost every project and while some of the points are obvious to begin with, others are being neglected in the first place. Later during the implementation questions come up like "Is it us pulling the data from somewhere? Or will they push the data to Magento?" or "Are they always transferring the full product catalog or only daily increments? How will they let us know if a product is deleted?"

Also, in many cases these discussion involve three or more parties: You, the merchant (aka the PO) and the provider of the third-party system. Not to forget that your project manager, the merchant's in-house IT and the third-parties' salesman might also have some opinions on how the integration should work. If things aren't being well-specified soon the finger-pointing will start if things go wrong and deadlines can't be met.

With this post I want to help uncovering some hidden complexities in the discovery phase of a Magento project. Instead of suggesting a technical solution I'm trying to come up with a checklist, a common vocabulary and a simple way to visualize everything in a diagram.

Entities to consider

Integrating Magento into one or more third-party systems is all about data. Exporting it, importing it, transferring and transforming it. But what's the data we're talking about? Besides the obvious like products and orders this is a (still incomplete) list of entities form a e-commerce domain model that might help you to design your integrations.

- products

- attributes

- attribute sets

- attribute groups

- attribute values

- categories

- customers

- customer addresses

- customer groups

- customer login information (username, password)

- tax configuration

- pricing (incl. a ton of fancy variants from tier pricing to group pricing)

- promotion rules

- coupon codes

- order

- order status updates

- order history (might also includes order from a different shop system)

- shipments

- shipping status updates

- invoices

- tracking code

- customer service messages

- customer support request

- returns

- content pages

Also add all custom entities that you're planning on building for that merchant. Talk about every single item, since this might uncover topics that haven't been discussed yet (at that point the merchant might not even be aware of this being something that needs clarification)

Make or Buy?

Luckily there's a third option: "use existing". Some of the solution come with Magento or are available for free.

Ready-to-go solutions

There are a couple of different solutions that can be used without any customization. They might not offer everything you need, but it might be at least a very good start to look at them and to consider extending them instead of building something from scratch:

Magento's standard interfaces

- Magento Web Service API (REST, SOAP) Magento's Web Service API comes in 2 different versions. Both are extensible (although V1 is a lot easier to extend). The API offers basic methods to interact with some of Magento's entity. Check out the documentation for more information: REST / SOAP

- Magento also has another web service API called "XML Connect". There's no real documentation, but reading the code should give you some insight. XML Connect is designed to interact with mobile application. In fact it was created for Magento's mobile app, but nothing stops you from reusing the code for your own purposes.

- Magento Import/Export This allows you to import and export Magento entities using a csv format. It's not super flexible, but in case your integration is simple this might be everything you need.

Third-party Magento modules

There are many modules out there that have solved the basic problem of importing products (and other entities) into Magento for you. While this is not everything you might have to to do integrate your Magento project into third-party system, it is probably a good subset of what needs to be done. Checkout Andreas von Studnitz's slidedeck on "Importing Products into Magento" where he shows some of the most popular modules.Make

In case none of the solutions mentioned above fits your requirements you'll have to start implementing your own APIs/importer. There are a couple of different options to consider on how to get data in and out.- domain model

- resource model

- webservice api classes

- writing directly to the database (this is the fastest, but it's not recommended unless you know exactly what you're doing and if you're willing to test and possibly adjust the code for every Magento version).

Characteristics Checklist

Entity

Let's start with the obvious one: Which entity are we transferring? While this sounds trivial, it's probably not. In many cases you have to come up with a common vocabulary:- What is the role and function of that entity in every system?

- Where does the data originate from?

- Does the entity already exist on both systems? Does it have the exact same meaning?

- Is the entity isolated in both system? Or is it part of another entity (e.g. customer, customer address, custom login information or: product, stock, prices)? Can it be easily separated?

Entity attributes

- What's the entity's unique identifier?

- What other attributes does this entity have?

- Can all attributes be mapped to each other? Are there attributes in Magento that don't have a correspondence in the third-party system? Or vice versa? If so, do we need to create these attribute in the other system? What is it going to do with that data?

Attribute values

- Do common attributes also share the same attribute values?

- How do you define boolean values? 0/1, true/false or even 1/2 (that's a tricky one, but check Mage_Catalog_Model_Product_Status!)

- If there are predefined values (options), can/will they by extended on the fly if a value is missing?

- Will the attribute value contain the value or only an id that stands for the value? What options are allowed?

- What exact data type does every single attribute have?

- Define the cardinality: Is the value optional? Is it required? Are multiple selection possible? What's the maximum number of selections? What's the minimum?

Scope of the data

- Are you using multiple stores in Magento? Or websites?

- Is there a corresponding concept in the third-party system?

- How do you map incoming/outgoing data from Magento stores to the other concept?

- Is there an implicit fallback mechanism for any data? Is there a default value?

- What are the different stores used for? Addressing different markets? Different languages? Different currencies?

- Is it possible that entities are present in one context, but missing in another? If so, it is good enough, if the entity is present, but disabled in a context where it shouldn't be?

- Which attributes a scope specific? Which aren't? Is the unique identifier the same one across scopes?

Master system

- Which system is the master system?

- Can data be created in a non-master system? Or are there two master systems?

- If so, how will data be merged back?

- How will conflicts be handled?

- What happens if inconsistent data is detected?

Transfer

Direction

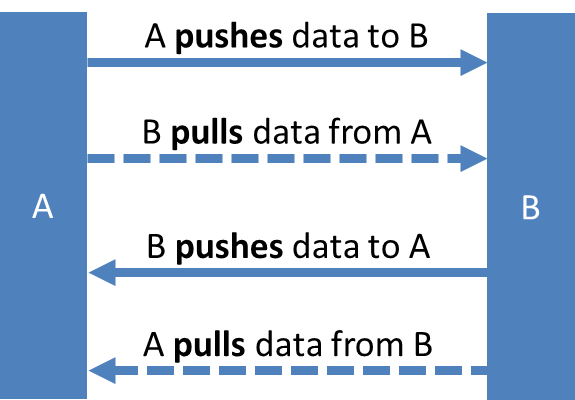

- In which direction is the data flowing? From Magento to the third-party system? Or vice versa?

- Is the system where the data originates from also the master system for the whole entity?

Active party (push/pull?)

- Which system is the active party? Note, that this is not necessarily related to the data flow direction. Magento could push data to the third-party system or the third-party system could pull the data from Magento. Both scenarios would result in data flowing from Magento to the third-party system.

Protocol/Storage

- What protocol is being used to transfer the data? (FTP, HTTP, email,...?)

- Is there a storage medium in between that acts like a hand-off place? (e.g. one system drops the files on an FTP server and the other one picks them up from there...)

- If that's the case, how will the receiving system keep track of what file has already been processed? (Will it archive the file after processing? What happens if the process dies half-way?)

Responses

- How will the receiving party let the sending party know that the data was processed correctly (or if not, then what was wrong?).

- Will a simple HTTP status do the trick? What if the data is not processed in the same request it is received? Or if it is not received via HTTP?

- Does there need to be another communication channel and/or data format to communicate the successful or erroneous outcome of a data transfer?

Security

- Is sensitive data transferred (that's probably true for every single communication channel)?

- How does the receiving party verify the authenticy of the sender and the data?

- Is encryption only required on the protocol level (HTTPS, SSH,...) or does the data also need to be encrypted separately?

Authentication

- How is authentication being handled?

- Is there a separate login call that returns a token that needs to be passed to any subsequent request?

- Or are credentials being passed via HTTP headers?

- Is authentication being handled within HTTP or within the application?

- Or is it a simply IP restriction only?

- Which party manages the credentials?

Volume

- What's the number of expected records per import? Hundreds? Thousands? Millions?

- Will your importer be able to load the entire file into memory to process it? Or do you need to traverse the file without loading it (e.g. using XML Reader?)

- Will you have to start processing data in parallel (multiple threads) to save time?

- Will your current importer be able to handle the volume in an acceptable amount of time?

API Workflow

- Is every API call isolated or are there multiple calls that are connected?

- Do I need to get a login token first?

- Is the data paginated?

Timing

- Is the data transferred synchronously or asynchronously? A synchronous data transfer would be required if you need the result of the operation within the request workflow. Synchronous integrations (and integrations that are exposed to the client - even if that's only through AJAX but still part of the frontend workflow, e.g. checkout) should be avoided if possible.

- Is there any expectation for (near) real-time data transfer?

- Is a delay acceptable? What's the maximum accepted delay for each entity? (e.g. less then 5 minutes before order confirmation email gets send)

- Or is the delay even something you want to have in place (e.g. holding orders back for an hour before they're transferred to the ERP)

- Is the data processed right away after it's being sent to the receiving system or is it being queued and processed at a later point in time? What delays are expected?

- Is all data equal or is there data that has higher priority and needs to be processed first? (e.g. processing new order vs. newsletter subscriptions)

Trigger

What is triggering new data transers?- Is there a fixed schedule? How often does data need to be transferred? Once per day? Every hour? Every 5 minutes?

- Is the data transfer only triggered manually? Where can this be done? Who should be allowed to do this?

- Is the data transfer event based? (e.g. after an order is being placed, a new customer signed up, or during indexing?)

- Is every event being processed individually or are they being grouped and processed in bulk? Maybe after a certain number of events were collected? Or based on a schedule?

- Can automated transfers (scheduled or triggered by event) be triggered manually in case an transfer failed and needs to be retried?

Error handling / success confirmation

- What happens if an error occurs? Will the current import stop? Or will the the current item only be skipped?

- Are there any relations to future data in the same import that might implicitly fail because an item has been skipped (e.g. parent product - child products?)

- How will we let the other system know? How will our system find out if something went wrong with our data?

- What happens then? Will the system retry automatically? Or only if a given class of errors (e.g. temporary communication errors) has been detected?

- Will there be any notification? Who needs to be notified? How often? Via email? Text?

- Do we also need to confirm successful data transfers and imports? What's the protocol here?

Transactions?

- Do imports need to be handled inside a transaction? Is it important that everything gets processed successfully (or nothing?)

- Can this be solved on the database layer? Or do we need to handle this on the application layer? How do we rollback half-way transactions?

- Does imported data have an immediate effect on the system? Or is it only prepared and needs to be committed later? (e.g. SOLR)

Integrity

- Do write operation need to be atomic?

- Will there be situations where two or more processes try to update the same data in parallel?

Data

- Who designs the protocol? Us or them?

- How is the protocol specified?

- Is it possible to define a validation schema? (e.g. XSD?)

- Can syntax and semantics be tested automatically?

- Is any preprocessing required (e.g. normalizing usernames, address verification, special characters on shipping labels)

Character set

- What character set does the systems require? (Inbound and outbound)

- What line ending are expected?

Format

- What's the data format? (e.g. XML, JSON, CSV, fixed width,...)

- Has this been used before or is this something we're creating for this project? Data format (xml, csv, fixed width,...). (Ideally including xml schemas)

- For CSV files: define line separators, column separators, how to quote, how to escape and how to deal with multi-line values.

- Does the data need to be human readable? Do we need to format it nicely (e.g indentation for XML files,...)

Compression / Bundling

- Are we transferring plain files or is the data compressed? (e.g gzip, zip)

- Are the files transferred individually or in a bundle (e.g. tar)

Completeness

- Does the data transfer always contain all available data for that entity? Or is it only a delta export? Or are individual records of group of records transferred?

- In case of delta transfers, will there be regular syncs with the full data to make sure that nothing got lost in between?

- For full data transfers, are records that are not part of the new data implicitly to be deleted? How will you handle this? Will you have to flush the whole dataset and then import the new data (resulting in a time-span where no or incomplete data is present in the system)? Or will you try to "remember" the data you touched and after the import delete everything you didn't touch? (This works fine but requires you to update data even is the record's content didn't change, which will slow down the import process.)

- Will a record replace an existing one on import or will the new data be merged on top of the existing one?

- Are there optional fields in the data? How do we handle the absence of an optional value in case the record we're about to overwrite already has data in that field?

- Will there be fields that can not be overwritten by an incoming record?

Deleting records

- Does the format specify a way how to delete individual records?

- If a record is deleted, should it actually be totally removed from the database? Or should it only marked as deleted allowing to re-enable it if it will come back in a future data import?

Staging

- Are new imported entities (or updated ones) active by default right after importing or is activating them a separate process? (E.g. triggered manually after reviewing the data)

- Does the content get enhanced after import? (e.g. CMS content, marketing information,...)

Exporting data

- For entities that flow out of Magento: Is the receiving system mapping all the data or does the exporter prepare the data in the right format?

- Is data provided in a raw format or does there need to be any pre-processing (e.g. formatting prices, rendering templates?)

- Is the data needed in the third-party structured the same way as in Magento or does Magento need to compose the information after retrieving records from different sources

- Will any data be aggregated for export?

System

- Which exact component of the system is doing or receiving the request? (E.g. REST Api, cli, ...)

- Which component of the system is delivering the data?

- Is there another middleware that does any preprocessing or dispatching?

Sharding

- Is data for the same entity split into different third-party systems? For example one Magento instance comes with different websites for US and Europe. Orders and inventory are handled by different systems in the two regions. Is there a single endpoint that dispatches the requests based on the store (e.g. website id)? Or does Magento need to keep track of multiple integrations that might also be different solutions?

Infrastructure

Look at all the endpoints in the infrastructure:- Is it a single server or a group of multiple servers?

- Are the load balanced? What happens if a server goes down? Will another one be able to pick up the session or is the session invalid and that (multi-step) data transfer needs to be restarted from scratch?)

- Is there a proxy in front of the endpoints?

- Is there any caching? Are caches being purged correctly?

Availability and Reliability

- What's the expected requests/sec that every component will be able to handle?

- Is there a problem with a massive amount of data (even if queuing up is OK, but will every component be able to handle this?)

- What's the expected response time?

- What happens if one of the components is down? Is the rest of the infrastructure decoupled, so that (at least) the most critical processes (e.g. placing orders,...) will continue to work?

- Is there a clear process and responsible person for every component in case something goes wrong?

- What SLAs have been discussed?

- Is it OK to include the third-party systems into stress tests?

Optimization patterns

There are a number of things that can be done to increase the performance of any integration. Here's a pattern that might be useful to detect if an entity needs to be updated or not: Create a hash (e.g. md5) of the raw incoming data (e.g. the raw xml string) and store this along with the data in an extra field (e.g. a new product attribute). When importing new records first check if the hash of the new data set matches the data that's already in the database. This should be a fast operation. And in case this entity is updated outside of the import process the hash field needs to be reset.Testing

Testing is a crucial (and often neglected) part of any development process. This also - and especially - includes implementing interfaces for other systems to consume and writing code that interacts with other interfaces. Make sure you have a strong set of integration tests in place that constantly check- if your interfaces behave like expected/specified

- if the third-party systems behave like expected/specified

- if incoming data meets the specification (validate data with xsd, do integrity tests for every data point if possible...)

- if generated outgoing data meets the specification.

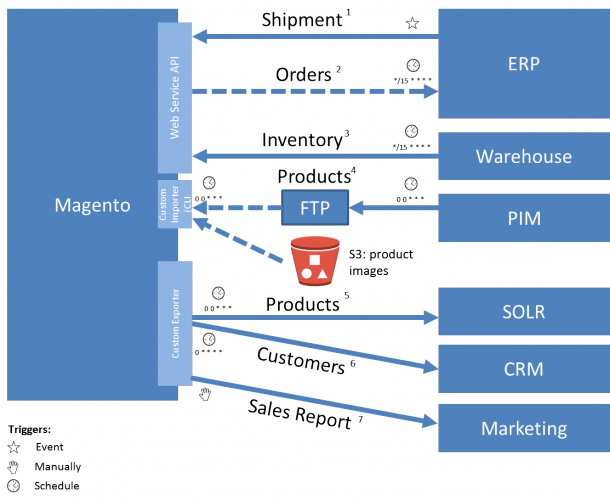

Visualization

One of the most important things in designing integrations is visualization. There doesn't seem to be any standard on how to visualize this and it's certainly not up to me to define one, but here's what has worked for me. And maybe you'll find this useful and will adopt this to visualize your architecture while discussing it with the team and the client:Boxes and lines

Drawing boxes and lines between them is basically what everybody does to visualize that two parties are connected. But I think this needs to be refined a little more: A line should- show the direction of the data flow (this is where the arrow points to)

- and should show if the sending or the receiving party is the active party here (push/pull)

More Detail

Besides that the visualization should/could contain even more information:- Instead of showing the systems also show the interfaces being used. These could be native ones, extended ones or new ones

- The color of the lines could stand for different file formats (json, xml, csv,...)

- Add information on what's triggering the data transfer to every arrows (event, manual, schedule)

- Use different color schemes to show the responsible team for every system and/or interface (e.g. your team, merchant's inhouse team, third-party vendor,...)

Tabular Boilerplate

The visualization alone doesn't carry all information for a clear specification. Instead add a number to every arrow and specify every connection in detail. Go back to the points mentioned earlier in this blog post to identify what's important for this connection. Define a tabular boilerplate/template and use this to specify every connection in detail. Example boilerplate:- System A: Magento

- Interface A: Custom module: ...

- System B: CRM

- Interface B: Native Webservice API, method: ...

- Active Party: Magento

- Trigger: a new customer signs up (event)

- Frequency: ~100/day

- Content: Individual custom records/

- Format: XML (provide more information with exact field mappings, XSD, character set,... and an example file)

- On Success: CRM response has a status code 200

- On Failure: CRM response has a status code 4xx or 5xx and

- Action on failure: Retry up to 3 times after 10 minutes each. If it still fails a notification email will be sent to ...

- ...

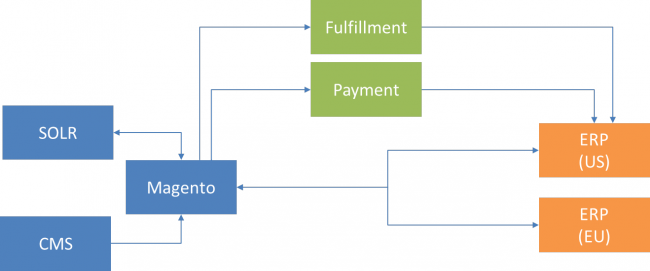

High Level Architecture

In case of an architecture that consists of many different components that interact with each other and that are developed and maintained it's probably useful to come up with a bird's view diagram of every component: